1. The different types of… Types in Scala

This blog post came into being after a few discussions about Types in Scala following some of JavaOne’s parties in 2013. After those discussions I realized that many of the same questions are repeated by different people while they are learning Scala. I thought that we didn’t have a full list of all the tricks we can do with Types in Scala, so I decided to write such a list, giving real-life examples of why we’d need these types.

2. WORK IN PROGRESS

While I’ve been working on this article for quite some time, there’s still MUCH to do! For example, Higher Kinds need a rewrite, there’s a lot of detail to be added to Self Types, and lots and lots more. Check the todo file for more.

If you’d like to help, please do! I’ll welcome any pull request, or suggestion (well, I’d prefer pull requests ;-).

Also, if you see a section marked with "✗" it means that it needs re-work or that it’s not complete in some way.

3. Type Ascription

Scala has Type Inference, which means that we can skip stating the Type of something each time in the source code, and instead just use `val`s or `def`s without saying the type explicitly. Being explicit about the type of something is called a Type Ascription (sometimes called a "Type Annotation", but this naming convention can easily cause confusion, and is not what is used in Scala’s spec).

trait Thing

def getThing = new Thing { }

// without Type Ascription, the type is inferred to be `Thing`

val inferred = getThing

// with Type Ascription

val thing: Thing = getThing

In these situations, leaving out the Type Ascription is OK, although you may decide to always ascribe return types of public methods (that’s a very good idea!) in order to make the code more self-documenting.

In case of doubt you can refer to the hint-questions below to decide whether or not to include a Type Ascription.

- Is it a parameter? Yes, you have to.
- Is it a public method’s return value? Yes, for self-documenting code and control over exported types.
- Is it a recursive or overloaded method’s return value? Yes, you have to.
- Do you need to return a more general interface than the inferencer would find? Yes, otherwise you’d expose your implementation details, for example.
- Else… No, don’t include a Type Ascription.

Related hint: Including Type Ascriptions speeds up compilation, and it’s generally nice to see the return type of a method.
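To make the hint-questions a bit more tangible, here is a minimal sketch. The names `AscriptionSketch`, `factorial`, `names` and `total` are made up for illustration and do not come from any library.

```scala
object AscriptionSketch {
  // Recursive method: the compiler cannot infer the result type, so it must be ascribed.
  def factorial(n: Int): BigInt =
    if (n <= 1) BigInt(1) else BigInt(n) * factorial(n - 1)

  // Public method: ascribing Seq[String] hides the fact that a Vector is used internally.
  // Without the ascription, the inferred (and exported) type would be Vector[String].
  def names: Seq[String] = Vector("Ada", "Grace")

  // Plain value with an obvious type: the ascription is left out.
  val total = names.size
}
```

The design choice here is simply to ascribe where the compiler forces us to, or where the type is part of a public contract, and to rely on inference everywhere else.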

So we put Type Ascriptions after value names. Having this said, let’s jump into the next topics, where these types will become more and more interesting.

4. Unified Type System - Any, AnyRef, AnyVal

We refer to Scala’s type system as being "unified" because there is a "Top Type", Any. This is different from Java, which has "special cases" in the form of primitive types (int, long, float, double, byte, char, short, boolean), which do not extend Java’s "Almost-Top Type", java.lang.Object.

Scala takes on the idea of having one common Top Type for all Types by introducing Any. Any is a supertype of both AnyRef and AnyVal.

AnyRef is the "object world" of Java (and the JVM), it corresponds to java.lang.Object, and is the supertype of all objects. AnyVal on the other hand represents the "value world" of Java, such as int and other JVM primitives.

Thanks to this hierarchy, we’re able to define methods taking Any - thus being compatible with both scala.Int instances as well as java.lang.String:

import scala.collection.mutable.ArrayBuffer

class Person

val allThings = ArrayBuffer[Any]()

val myInt = 42 // Int, kept as low-level `int` during runtime

allThings += myInt // Int (extends AnyVal)

// has to be boxed (!) -> becomes java.lang.Integer in the collection (!)

allThings += new Person() // Person (extends AnyRef), no magic here

For the type system it’s transparent, though on the JVM level once we get into ArrayBuffer[Any] our Int instances will have to be packed into objects. Let’s investigate the above example by using the Scala REPL and its :javap command (which shows the generated bytecode for our test class):

35: invokevirtual #47 // Method myInt:()I

38: invokestatic #53 // Method scala/runtime/BoxesRunTime.boxToInteger:(I)Ljava/lang/Integer;

41: invokevirtual #57 // Method scala/collection/mutable/ArrayBuffer.$plus$eq:(Ljava/lang/Object;)Lscala/collection/mutable/ArrayBuffer;

You’ll notice that myInt is still carrying the value of an int primitive (this is visible as the I at the end of the myInt:()I invokevirtual call). Then, right before adding it to the ArrayBuffer, scalac inserted a call to BoxesRunTime.boxToInteger:(I)Ljava/lang/Integer; (a small hint for infrequent bytecode readers: the method it calls is public static Integer boxToInteger(int i)). This way, by having a smart compiler and treating everything as an object in this common hierarchy, we’re able to get away from the "but primitives are different" edge cases, at least at the level of our Scala source code, as the compiler takes care of it for us. On the JVM level, the distinction is still there of course, and scalac will do its best to keep using primitives wherever possible, as operations on them are faster and take less memory (objects are obviously bigger than primitives).

On the other hand, we can limit a method to only be able to work on "lightweight" Value Types:

def check(in: AnyVal) = ()

check(42) // Int -> AnyVal

check(13.37) // Double -> AnyVal

check(new Object) // -> AnyRef = fails to compile

In the above example the parameter is simply typed as AnyVal. The general idea is that this method will only take value types, be it Int or our own Value Class. While probably not used very often, it shows how nicely the type system embraces Java primitives and brings them into the "real" type system, and not as a separate case, as in Java.
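As a small illustration of what the unified hierarchy buys us, the sketch below accepts Any and then tells the "value world" and the "object world" apart with a pattern match. `UnifiedSketch` and `describe` are hypothetical names used only for this example.

```scala
object UnifiedSketch {
  // One method handles both AnyVal (Int) and AnyRef (String) arguments.
  def describe(x: Any): String = x match {
    case i: Int    => s"Int $i"       // matches the (boxed) Int
    case s: String => s"String $s"
    case _         => "something else"
  }

  // Int, String and Double can live in the same List[Any].
  val described: List[String] = List[Any](42, "hi", 1.5).map(describe)
}
```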

5. The Bottom Types - Nothing and Null

In Scala everything has "some" type… but have you ever wondered how the type inferencer can still work, and infer sound types when working with "weird" situations like throwing exceptions? Let’s investigate the below "if/else throw" example:

val thing: Int =

if (test)

42 // : Int

else

throw new Exception("Whoops!") // : Nothing

As you can see in the comments, the type of the if block is Int (easy), and the type of the else block is Nothing (interesting). The inferencer was able to infer that the thing value will only ever be of type Int. This is because of the Bottom Type property of Nothing.

A very nice intuition about how bottom types work is: "Nothing extends everything."


Type inference always looks for the "common type" of both branches in an if statement, so if the other branch here is a Type that extends everything, the inferred type will automatically be the Type from the first branch.

Types visualized:

[Int] -> ... -> AnyVal -> Any

Nothing -> [Int] -> ... -> AnyVal -> Any
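The same property is what makes "failing" helper methods fit into any expression. Below is a minimal sketch; `NothingSketch`, `fail` and `parsePositive` are made-up names, not part of the standard library.

```scala
object NothingSketch {
  // A method that never returns normally can be typed as Nothing.
  def fail(msg: String): Nothing = throw new IllegalArgumentException(msg)

  // No ascription on purpose: Int and Nothing have the common type Int,
  // so the result type is inferred to be Int.
  def parsePositive(n: Int) = if (n > 0) n else fail("not positive")

  // Compiles only because the inferred result type really is Int.
  val ok: Int = parsePositive(3)
}
```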

The same reasoning can be applied to the second Bottom Type in Scala - Null.

val thing: String =

if (test)

"Yay!" // : String

else

null // : Null

The type of thing is as expected, String. Null follows ALMOST the same rules as Nothing. I’ll use this case to take a small detour to talk about inference, and the differences between AnyVals and AnyRefs.

Types visualized:

[String] -> AnyRef -> Any

Null -> [String] -> AnyRef -> Any

inferred type: String

Let’s think about Int and other primitives, which cannot hold null values. To investigate this case let’s drop into the REPL and use the :type command (which allows us to get the type of an expression):

scala> :type if (false) 23 else null

Any

This is different from the case with a String object in one of the branches. Let’s look into the types in detail here, as Null is a bit less "extends everything" than Nothing. Let’s see what Int extends in detail, by using :type again on it:

scala> :type -v 12

// Type signature

Int

// Internal Type structure

TypeRef(TypeSymbol(final abstract class Int extends AnyVal))

The verbose option adds a bit more information here: now we know that Int is an AnyVal, a special class representing value types, which cannot hold Null. If we look into AnyVal’s sources (as of Scala 2.10.x), we’ll find:

abstract class AnyVal extends Any with NotNull

I’m bringing this up here because the core functionality of AnyVal is so nicely represented using the Types here. Notice the NotNull trait!

Coming back to the subject: why was the common Type for our if statement, with an AnyVal in one branch and a null in the other, Any and not something else? The one-sentence way to define it is: Null extends all AnyRefs whereas Nothing extends anything. As AnyVals (such as numbers) are not in the same tree as AnyRefs, the only common Type between a number and a null value is Any, which explains our case.

Types visualized:

Int -> NotNull -> AnyVal -> [Any]

Null -> AnyRef -> [Any]

inferred type: Any
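Both cases can be put side by side in a short sketch. The object and method names (`NullSketch`, `ref`, `mixed`) are hypothetical, and the result types are ascribed explicitly so that the compiler checks them for us.

```scala
object NullSketch {
  // Null is a subtype of every AnyRef, so both branches conform to String.
  def ref(flag: Boolean): String = if (flag) "Yay!" else null

  // Int is an AnyVal, so the only common supertype of Int and Null is Any.
  def mixed(flag: Boolean): Any = if (flag) 23 else null

  // val broken: Int = null   // does not compile: Null is not a subtype of Int
}
```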

6. Type of an object

Scala `object`s are implemented via classes (obviously, as it’s the basic building block on the JVM), but you’ll notice that we cannot get their type the same way as we would with a simple class…

I would surprisingly often get the question of how to pass an object into a method. Just saying obj: ExampleObj won’t work because that’s already referring to the instance, so there’s a member called type which should be used in such cases.

What it might look like in your code is shown by the example below:

object ExampleObj

def takeAnObject(obj: ExampleObj.type) = {}

takeAnObject(ExampleObj)

7. Type Variance in Scala

Variance, in general, can be explained as "type compatible-ness" between types, forming an extends relation.

The most popular cases where you’ll have to deal with this is when working with containers or functions (so… surprisingly often!).

A major difference from Java (where arrays are covariant) is that container types in Scala are invariant by default!

This means that if you have a container defined as Box[A], you will not be able to use a Box[Apple] where a Box[Fruit] is expected, even though an Apple IS-A Fruit.

Variance in Scala is defined by using + and - signs in front of type parameters. In the table below, T' denotes a subtype of T.

| Name | Description | Scala Syntax |
|---|---|---|
| Invariant | C[T'] and C[T] are not related | C[T] |
| Covariant | C[T'] is a subclass of C[T] | C[+T] |
| Contravariant | C[T] is a subclass of C[T'] | C[-T] |

The above table illustrates, in an abstract way, all variance cases we’ll have to worry about. In case you’re wondering where you’d have to care about this: in fact, each time you’re working with collections you’re faced with the question "is it covariant?".
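The three rows of the table can be tried out with a few tiny containers. This is only a sketch: `InvariantBox`, `CovariantBox` and `Printer` are hypothetical types invented for this example, while the variance of function types (contravariant in the argument, covariant in the result) is how scala.Function1 is declared.

```scala
object VarianceSketch {
  class Fruit
  class Apple extends Fruit

  class InvariantBox[A](val value: A)          // C[T]
  class CovariantBox[+A](val value: A)         // C[+T]
  trait Printer[-A] { def print(a: A): String } // C[-T]

  // Covariant: a box of apples can be used as a box of fruit.
  val apples = new CovariantBox[Apple](new Apple)
  val fruits: CovariantBox[Fruit] = apples

  // Invariant: the same assignment is rejected by the compiler.
  // val bad: InvariantBox[Fruit] = new InvariantBox[Apple](new Apple)

  // Contravariant: something that prints any fruit can also print apples.
  val fruitPrinter: Printer[Fruit] = new Printer[Fruit] {
    def print(a: Fruit): String = "a fruit"
  }
  val applePrinter: Printer[Apple] = fruitPrinter

  // Functions combine both directions.
  val describeFruit: Fruit => String = _ => "fruit"
  val describeApple: Apple => Any = describeFruit
}
```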

| Most immutable collections are covariant, and most mutable collections are invariant. |

There are (at least) two nice and very intuitive examples of this in Scala: "any collection", where we’ll use a List[+A] as our example, and functions. When talking about List in Scala, we usually mean scala.collection.immutable.List[+A], which is immutable as well as covariant. Let’s look at how this relates to building lists containing items of different types.

class Fruit

case class Apple() extends Fruit

case class Orange() extends Fruit

val l1: List[Apple] = Apple() :: Nil

val l2: List[Fruit] = Orange() :: l1

// and also, it's safe to prepend with "anything",

// as we're building a new list - not modifying the previous instance

val l3: List[AnyRef] = "" :: l2

It’s worth mentioning that while having immutable collections covariant is safe, the same cannot be said about mutable collections. The classic example here is Array[T], which is invariant. Let’s look at what invariance means for us here, and how it saves us from mistakes:

// won't compile

val a: Array[Any] = Array[Int](1, 2, 3)

Such an assignment won’t compile, because of Array’s invariance. If this assignment were valid, we’d be able to write code such as a(0) = "", which would fail at runtime with the dreaded ArrayStoreException.

We said that "most" immutable collections are covariant in Scala. In case you’re curious, one example of an immutable collection which stands out from that, and is invariant, is Set[A].


7.1. Traits, as in "interfaces with implementation"

First, let’s take a look at the simplest thing possible about traits: how we can basically treat a type with multiple traits mixed in as if it were implementing these "interfaces with implementation", as you might be tempted to call them if coming from Java-land:

class Base { def b = "" }

trait Cool { def c = "" }

trait Awesome { def a ="" }

class BA extends Base with Awesome

class BC extends Base with Cool

// as you might expect, you can upcast these instances into any of the traits they've mixed-in

val ba: BA = new BA

val bc: Base with Cool = new BC

val b1: Base = ba

val b2: Base = bc

ba.a

bc.c

b1.b

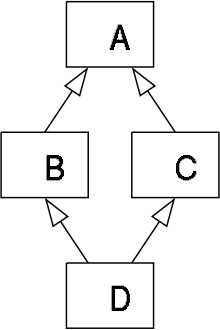

So far this should have been relatively well known to you. Now let’s go into the world of the "diamond problem", which people who know C++ might have been expecting. Basically, "The Diamond Problem" is a situation during multiple inheritance where we’re not sure which implementation we want to refer to. The accompanying image illustrates the problem, if you think of traits as if they were plain multiple inheritance:

7.2. Type Linearization vs. The Diamond Problem

For the "diamond problem" to appear, it’s enough if we have one overriding implementation in B and/or C. This introduces an ambiguity when calling the common method in D: did we inherit the version of the method from C or from B? In Scala, the case with only one overriding method is very simple: the override wins. But let’s work through the more complex case:

- class `A` defines a method `common` returning `a`,
- trait `B` DOES override `common` to return `b`,
- trait `C` DOES override `common` to return `c`,
- class `D` extends both `B` and `C`,
- which version of the `common` method does class `D` inherit? The overridden implementation from `C`, or the one from `B`?

This ambiguity is a pain point of every multiple-inheritance-like mechanism. Scala solves this problem with so-called Type Linearization.

In other words, given a diamond class hierarchy, we are always (and deterministically) able to determine what will be called when inside D we call common.

Let’s put this into code and then talk about linearization:

trait A { def common = "A" }

trait B extends A { override def common = "B" }

trait C extends A { override def common = "C" }

class D1 extends B with C

class D2 extends C with B

The results will be as follows:

(new D1).common == "C"

(new D2).common == "B"

The reason for this is that Scala applied the type linearization for us here. The algorithm goes like this:

- start building a list of types, the first element is the type we’re linearizing right now,
- expand each supertype recursively and put all their types into this list (it should be flat, not nested),
- remove duplicates from the resulting list, by scanning it from the left, and removing a type that you’ve already "seen",
- done.

Let’s apply this algorithm on our diamond example by hand, to verify why D1 extends B with C (and D2 extends C with B) yielded the results they did:

// start with D1:

B with C with <D1>

// expand all the types until you reach Any for all of them:

(Any with AnyRef with A with B) with (Any with AnyRef with A with C) with <D1>

// remove duplicates by removing "already seen" types, when moving left-to-right:

(Any with AnyRef with A with B) with ( C) with <D1>

// write the resulting type nicely:

Any with AnyRef with A with B with C with <D1>

So when calling the common method, it’s now very simple to decide which version we want to call: we simply look at the linearized type, and try to resolve the method by going from the right in the resulting linearized type. In the case of D1, the "rightmost" trait providing an implementation of common is C, so it’s overriding the implementation provided by B. The result of calling common inside D1 would be "C".

You can wrap your head around this method by trying it out on the D2 class: it should linearize with B after C, thus yielding "B" if you run the code.
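If you’d rather not do this by hand, the steps described above can be modelled in a few lines. Note that this is only a sketch of the simplified algorithm from this section, working on a hand-written table of type names; it is not how scalac implements linearization, and `parents`, `expand`, `linearize` and `resolveCommon` are hypothetical names.

```scala
object LinearizeAlgoSketch {
  // Hypothetical model: each type name maps to its declared parents, in declaration order.
  val parents: Map[String, List[String]] = Map(
    "Any"    -> Nil,
    "AnyRef" -> List("Any"),
    "A"      -> List("AnyRef"),
    "B"      -> List("A"),
    "C"      -> List("A"),
    "D1"     -> List("B", "C"),
    "D2"     -> List("C", "B")
  )

  // Expand every supertype recursively into one flat list, ending with the type itself.
  def expand(t: String): List[String] = parents(t).flatMap(expand) :+ t

  // Remove duplicates, keeping the first ("already seen") occurrence from the left.
  def linearize(t: String): List[String] = expand(t).distinct

  // Types that provide their own implementation of `common` in our example.
  val definesCommon: Set[String] = Set("A", "B", "C")

  // "Rightmost wins": scan the linearized type from the right.
  def resolveCommon(t: String): Option[String] = linearize(t).reverse.find(definesCommon)
}
```

For D1 this yields the same list as the hand-worked example, and for D2 it places B after C.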

Also it’s rather easy to resolve the simpler cases of linearization by just thinking "rightmost wins", but this is quite a simplification which, while helpful, does not give the full picture about the algorithm.

It is worth mentioning that using this technique we also know "who is my super?". It’s as easy as "looking left" in the linearized type, from whichever class you want to check. So for example in our case (D1), the super of C is B.
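The "looking left" rule becomes visible once every override also calls super. The sketch below is a variation of the diamond example (it lives in its own object, `LinearizationSketch`, and the returned strings are made up so that the call chain can be read off the result).

```scala
object LinearizationSketch {
  trait A { def common: String = "A" }
  trait B extends A { override def common: String = "B>" + super.common }
  trait C extends A { override def common: String = "C>" + super.common }

  class D1 extends B with C // linearized: ... A with B with C with D1
  class D2 extends C with B // linearized: ... A with C with B with D2

  val d1 = (new D1).common // "C>B>A": inside D1, the super of C is B
  val d2 = (new D2).common // "B>C>A": inside D2, the super of B is C
}
```

Notice that C itself only extends A, yet in the context of D1 its super call lands in B, exactly as the linearized type predicts.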

8. Refined Types (refinements)

Refinements are very easy to explain as "subclassing without naming the subclass". So in source code it would look like this:

class Entity {

def persistForReal() = {}

}

trait Persister {

def doPersist(e: Entity) = {

e.persistForReal()

}

}

// our refined instance (and type):

val refinedMockPersister = new Persister {

override def doPersist(e: Entity) = ()

}

9. Package Object

Package Objects were added to Scala in 2.8. They do not really extend the type system as such, but they provide a pretty useful pattern for "importing a bunch of stuff together", as well as being one of the places the compiler will look for implicit values.

Declaring a package object is as simple as using the keywords package and object in conjunction, such as:

// src/main/scala/com/garden/apples/package.scala

package com.garden

package object apples extends RedApples with GreenApples {

val redApples = List(red1, red2)

val greenApples = List(green1, green2)

}

trait RedApples {

val red1, red2 = "red"

}

trait GreenApples {

val green1, green2 = "green"

}

It’s customary to put package objects in a file called package.scala inside the package they’re the object for. Investigate the above example’s source path and package to see what that means.

On the usage side, you get the real gains, because when you import "the package", you import any state that is defined in the package object along with it:

import com.garden.apples._

redApples foreach println

10. Type Alias

It’s not really another kind of type, but a trick we can use to make our code more readable:

type User = String

type Age = Int

val data: Map[User, Age] = Map.empty

Using this trick the Map definition now suddenly "makes sense!". If we’d just used a Map[String, Int], we’d make the code less readable. Here we can keep using our primitives (maybe we need this for performance etc.), but name them so it makes sense for the future reader of this class.

Note that when you create an alias for a class, you do not alias its companion object in tandem. For example, assuming you’ve got case class Person(name: String) and an alias type User = Person, calling User("John") will result in an error, because Person("John") implicitly invokes the apply method of the Person companion object, which is not aliased in this case.
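One common way around this is to alias the companion as well, by adding a `val` with the same name as the type alias. The following is a minimal sketch of that idea; `AliasSketch` and `john` are names made up for the example.

```scala
object AliasSketch {
  case class Person(name: String)

  type User = Person // aliases the type only
  val User = Person  // aliases the companion object, so User.apply is available

  // Both the type position and the constructor-like call now go through the alias.
  val john: User = User("John")
}
```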


11. Abstract Type Member

Let’s now go deeper into the use cases of Type Aliases, which we call Abstract Type Members.

With Abstract Type Members we say "I expect someone to tell me about some type - I’ll refer to it by the name MyType".

Its most basic function is allowing us to define generic classes (templates), but instead of using the class Clazz[A, B] syntax, we name the type parameters inside the class, like this:

trait SimplestContainer {

type A // Abstract Type Member

def value: A

}

Which for Java folks may seem very similar to the Container<A> syntax at first, but it’s a bit more powerful as we’ll see in the section about Path Dependent Types, as well as in the below example.

It is important to notice that while the name contains the word "abstract", a type member does not behave exactly like an abstract field: you can still create a new instance of SimplestContainer without "implementing" the type member A (only the abstract value method has to be defined):

new SimplestContainer { def value = ??? } // valid, but A is "anything"

You might be wondering what type A was bound to, since we didn’t provide any information about it anywhere.

Turns out type A is actually just shorthand for type A >: Nothing <: Any, which means "anything".

object IntContainer extends SimplestContainer {

type A = Int

def value = 42

}

So we "provide the type" using a Type Alias, and now we can implement the value method which returns an Int.

The more interesting uses of Abstract Type Members start when we apply constraints to them. For example, imagine you want to have a container that can only store instances of Number. Such a constraint can be annotated on a type member right where we define it:

trait OnlyNumbersContainer {

type A <: Number

def value: A

}

Or we can add constraints later on in the class hierarchy, for example by mixing in a trait that states "only Numbers":

trait SimpleContainer {

type A

def value: A

}

trait OnlyNumbers {

type A <: Number

}

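val ints = new SimpleContainer with OnlyNumbers {

type A = Integer // bind A to a concrete subtype of Number

def value: A = 12 // the Int literal is converted to java.lang.Integer

}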

// below won't compile

val _ = new SimpleContainer with OnlyNumbers {

def value = "" // error: type mismatch; found: String(""); required: this.A

}

So, as you can see, we can use Abstract Type Members in situations similar to those where we use Type Parameters, but without the pain of having to pass them around explicitly all the time: the passing around happens because the type is a member. The price paid here though is that we bind those types by name.
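To illustrate the "no need to pass them around" part, here is a small sketch in which a method refers to the type member of its argument instead of taking a type parameter. The names `Container`, `StringContainer` and `extract` are hypothetical, and `IntContainer` is redefined locally so the example is self-contained.

```scala
object TypeMemberSketch {
  trait Container {
    type A
    def value: A
  }

  object IntContainer extends Container {
    type A = Int
    def value: A = 42
  }

  object StringContainer extends Container {
    type A = String
    def value: A = "hi"
  }

  // The result type depends on the argument (c.A), no type parameter needed.
  def extract(c: Container): c.A = c.value

  val n: Int = extract(IntContainer)
  val s: String = extract(StringContainer)
}
```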

12. Self-Recursive Type

Self-recursive Types are referred to as F-Bounded Types in most literature, so you may find many articles or blog posts referring to "F-bounded". Actually, this is just another name for "self-recursive" and stands for situations where the subtype constraint itself is parametrized by one of the binders occurring on the left-hand side. Since the wording self-recursive is more intuitive, let’s stick to it for the rest of the section (the subsection title is aimed at people who try to google what "F-bounded" means).

12.1. F-Bounded Type

While this is not a Scala-specific type, it still sometimes raises a few eyebrows. One example of a self-recursive type many of us are (perhaps unknowingly) familiar with is Java’s Enum<E>; if you’re curious about it, check out the Enum sources from Java. But now back to Scala, and first let’s see what we’re actually talking about.

| For this section we will not dive very deep into this type. If you’re curious about a more in-depth use case in Scala, you may look into Kris Nuttycombe’s F-Bounded Type Polymorphism Considered Tricky. |

Imagine you have some Fruit trait, and both an Apple and an Orange extend it. The Fruit trait also has a compareTo method, and here the problem comes up: imagine you’d want to say "I can’t compare oranges with apples, they’re totally different things!". First let’s look at how we lose this compile-time safety with the naive implementation:

// naive impl, Fruit is NOT self-recursively parameterised

trait Fruit {

final def compareTo(other: Fruit): Boolean = true // impl doesn't matter in our example, we care about compile-time

}

class Apple extends Fruit

class Orange extends Fruit

val apple = new Apple()

val orange = new Orange()

apple compareTo orange // compiles, but we want to make this NOT compile!

So in the naive implementation, since the trait Fruit has no clue about the types extending it, it’s not possible to restrict the compareTo signature to only allow "the same subclass as `this`" in the parameter. Let’s now rewrite this example to use a Self-Recursive Type Parameter:

trait Fruit[T <: Fruit[T]] {

final def compareTo(other: Fruit[T]): Boolean = true // impl doesn't matter in our example

}

class Apple extends Fruit[Apple]

class Orange extends Fruit[Orange]

val apple = new Apple

val orange = new Orange

Notice the Type Parameter in Fruit’s signature. You could read it as "I take some T, that T must be a Fruit[T]", and the only way to satisfy such bounds is by extending this trait as we do with Apple and Orange now. Now if we’d try comparing apple to orange we’ll get a compile time error:

scala> orange compareTo apple

:13: error: type mismatch;

found : Apple

required: Fruit[Orange]

orange compareTo apple

scala> orange compareTo orange

res1: Boolean = true

So now we’re sure we’ll only ever compare apples with apples, and other Fruit with the same kind (subclass) of Fruit. There’s more to discuss here though: what about subclasses of Apple and Orange? Well, because we "filled in" the type parameter at the Apple / Orange level in the type hierarchy, we basically said "apples can only be compared to apples", which means that subclasses of Apple can be compared with each other. Fruit’s signature of compareTo will still be happy, because the right-hand side of our call would be some Fruit[Apple], only a bit more concrete. For example, let’s try this with a Japanese apple (ja. "りんご", "ringo") and a Polish apple (pl. "Jabłuszko"):

object `りんご` extends Apple

object Jabłuszko extends Apple

`りんご` compareTo Jabłuszko

// true

| You could get the same type-safety using more fancy tricks, like path dependent types or implicit parameters and type classes. But the simplest thing that does-the-job here would be this. |
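The self-recursive bound also pays off in generic code, because a method can demand "a list of the same kind of fruit" and get the precise type back. The sketch below is a variation of the example with made-up members (`weight`, `heavierThan`) and a hypothetical helper `heaviest`; it assumes a non-empty list.

```scala
object FBoundedSketch {
  trait Fruit[T <: Fruit[T]] {
    def weight: Int
    // `other` must be of the same concrete kind T as the implementing class.
    def heavierThan(other: T): Boolean = weight > other.weight
  }

  class Apple(val weight: Int) extends Fruit[Apple]
  class Orange(val weight: Int) extends Fruit[Orange]

  // Works for any single kind of fruit and returns that exact kind.
  // Assumes the list is non-empty (head would throw otherwise).
  def heaviest[T <: Fruit[T]](fruits: List[T]): T =
    fruits.tail.foldLeft(fruits.head)((best, f) => if (f.heavierThan(best)) f else best)

  val bestApple: Apple = heaviest(List(new Apple(1), new Apple(3), new Apple(2)))

  // new Apple(1).heavierThan(new Orange(1)) // does not compile: Orange is not an Apple
}
```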

13. Type Constructor ✗

Type Constructors act pretty much like functions, but on the type level.

That is, if in normal programming you can have a function that takes a value a and returns a value b based on the previous one, then in type-level programming you’d think of a List[+A] being a type constructor, that is:

- `List[+A]` takes a type parameter (`A`),
- by itself it’s not a valid type, you need to fill in the `A` somehow - "construct the type",
- by filling it in with `Int` you’d get `List[Int]` which is a concrete type.

Using this example, you can see how similar it is to normal constructors - with the only difference that here we work on types, and not instances of objects. It’s worth reminding here that in Scala it is not valid to say something is of type List, unlike in Java where javac would put the List<Object> for you. Scala is more strict here, and won’t allow us to use just a List in the place of a type, as it’s expecting a real type - not a type constructor.
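A short sketch of "filling in" a type constructor follows. `TypeConstructorSketch` and the alias `Pairs` are names invented for the example; a parameterised type alias is simply one way to define a type constructor of our own.

```scala
object TypeConstructorSketch {
  // val xs: List = Nil // does not compile: List is a type constructor, not a type

  // Applying the type constructor List to Int gives the concrete type List[Int].
  val ints: List[Int] = List(1, 2, 3)

  // Our own type constructor: Pairs takes one type and returns another.
  type Pairs[A] = List[(A, A)]
  val p: Pairs[Int] = List((1, 2), (3, 4))
}
```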

Related to this subject is that with Scala 2.11.x we’re getting a new power-user command in the REPL: the :kind command. It allows you to check whether a type is higher-kinded or not. Let’s check it out on a simple type constructor, such as List[+A], first:

// Welcome to Scala version 2.11.0-M5 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0-ea).

// Type in expressions to have them evaluated.

:kind List

// scala.collection.immutable.List's kind is F[+A]

:kind -v List

// scala.collection.immutable.List's kind is F[+A]

// * -(+)-> *

// This is a type constructor: a 1st-order-kinded type.

Here we see that scalac is able to tell us that List, in fact, is a type constructor (it’s way more talkative when used with the -verbose option). Let’s investigate the syntax right above this information: * -> *. This syntax is widely used to represent kinds, and it looks quite Haskell-inspired, as this is the syntax Haskell uses to print types of functions. The most intuitive way to read it out loud would be "takes one type, returns another type". You might have noticed that we’ve omitted something from Scala’s exact output: the plus sign in the arrow (as in * -(+)-> *). It denotes variance, and you can read up in detail about variance in the section Type Variance in Scala.

As already mentioned, List[+A] (or Option[+A], or Set[A]… or anything that has one type parameter) is the simplest case of a type constructor: these take one parameter.

We refer to them as first-order kinds (* -> *). It’s also worth mentioning that even a Pair[+A, +B] (which we can represent as * -> * -> *) is still not a "higher-order kind" - it’s still first-order. In the next section we’ll dissect what exactly higher kinds give us and how to notice one.

14. Higher-Order Kind ✗

| TODO nothing here yet, coming soon… |

Higher Kinds, on the other hand, allow us to abstract over type constructors, just like type constructors allow us to abstract over the types they take.
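To make "abstracting over type constructors" a bit more concrete, here is a minimal sketch. The trait `Mapper` and the method `incrementAll` are hypothetical names for this example (not scalaz API); older compilers may emit a feature warning for `F[_]` unless scala.language.higherKinds is imported.

```scala
object HigherKindSketch {
  // F[_] is a type constructor parameter: Mapper abstracts over List, Option, ...
  trait Mapper[F[_]] {
    def map[A, B](fa: F[A])(f: A => B): F[B]
  }

  val listMapper: Mapper[List] = new Mapper[List] {
    def map[A, B](fa: List[A])(f: A => B): List[B] = fa.map(f)
  }

  val optionMapper: Mapper[Option] = new Mapper[Option] {
    def map[A, B](fa: Option[A])(f: A => B): Option[B] = fa.map(f)
  }

  // Written once, usable with any F that has a Mapper.
  def incrementAll[F[_]](fa: F[Int])(m: Mapper[F]): F[Int] = m.map(fa)(_ + 1)
}
```

Here `Mapper` has the kind (* -> *) -> *, since it takes a type constructor and returns a type.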

A classic example here is a Monad:

scala> import scalaz._

import scalaz._

scala> :k Monad // Finds locally imported types.

Monad's kind is (* -> *) -> *

This is a type constructor that takes type constructor(s): a higher-kinded type.

15. Case Class

Case Classes are one of the most useful nifty little compiler tricks available in Scala.

While not being very complicated, they help a lot with otherwise very tedious and boring things such as equals, hashCode and toString implementations, and also preparing apply / unapply methods in order to be used with pattern matching, and more.

A case class is defined just like a normal class in Scala, but prepended with the case keyword:

case class Circle(radius: Double)

Just by this one line, we have already implemented the Value Object pattern. This means that by defining a case class we automatically get these benefits:

- instances of it are immutable,
- can be compared using `equals`, and equality is defined by its fields (NOT object identity like it would be the case with a normal class),
- its `hashCode` adheres to the `equals` contract, and is based on the values of the class,
- the `radius` constructor parameter is a public `val`,
- its `toString` is composed of the class name and values of the fields it contains (for our Circle it would be implemented as `def toString = s"Circle($radius)"`).

Let’s see what we’ve got so far, and expand on this using a "real life" example, this time implementing a Point class, because we’ll need more than just one field to show off some interesting features case classes provide us with:

case class Point(x: Int, y: Int) // (1)

val a = Point(0, 0) // (2)

// a.toString == "Point(0,0)" (3)

val b = a.copy(y = 10) // (4)

// b.toString == "Point(0,10)"

a == Point(0, 0) // (5)

| 1 | x and y are automatically defined as val members |

| 2 | a Point companion object is generated for it, with an apply(x: Int, y: Int) method which we can use to create instances using this syntax |

| 3 | the generated toString method consists of the classname and case class parameter values |

| 4 | the copy(...) method makes it easy to create derivative objects by changing only selected fields |

| 5 | equality of case classes is value based (equals and hashCode implementations based on the case class parameters are generated) |

Not only that, but a case class is ready to be used with pattern matching, using either the "usual" or "extractor" syntax:

Circle(2.5) match {

case Circle(r) => println("Radius = " + r)

}

val Circle(r) = Circle(2.5)

val r2 = r + r
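With more than one field, pattern matching gets more interesting, since we can mix literals and variables in the same pattern. A minimal sketch, where `CaseClassSketch` and `describe` are made-up names:

```scala
object CaseClassSketch {
  case class Point(x: Int, y: Int)

  // Patterns are tried top to bottom; literals must match, names bind values.
  def describe(p: Point): String = p match {
    case Point(0, 0) => "origin"
    case Point(x, 0) => s"on the x axis at $x"
    case Point(0, y) => s"on the y axis at $y"
    case Point(x, y) => s"at ($x, $y)"
  }
}
```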

16. Enumeration

Scala does not have a built-in "enum" like Java does. Instead we use a few tricks (embedded in Enumeration) to write something pretty similar.

16.1. Enumeration

The current (2.10.x) way of implementing an enum-like structure is by using the Enumeration class that comes with Scala:

object Main extends App {

object WeekDay extends Enumeration { // (1)

type WeekDay = Value // (2)

val Mon, Tue, Wed, Thu, Fri, Sat, Sun = Value // (3)

}

import WeekDay._ // (4)

def isWorkingDay(d: WeekDay) = ! (d == Sat || d == Sun)

WeekDay.values filter isWorkingDay foreach println // (5)

}

| 1 | First we declare an object that will hold our enumeration values, it has to extend Enumeration. |

| 2 | Here we define a Type Alias for Enumeration’s internal Value type. Since we make the name match the object’s name, we’ll be able to refer to it consistently via "WeekDay" (yes, this is pretty much a hack) |

| 3 | Here we use "multi assignment", so every val on the left-hand side gets assigned a different instance of Value. You could have written this as 7 vals. |

| 4 | This import does two things: first, we can refer to Mon without prefixing it with WeekDay, but it also brings the type WeekDay into scope, so we can use it in the method definition below |

| 5 | Lastly, we get some Enumeration methods. These are not really magic; most of the action happens when we create new Value instances |

As you can see, it’s actually not a built-in and is implemented by smartly using the Scala type system to make it look like an enum. For some uses this may be enough but it’s not as rich as the Java enum, where adding values and behavior to each case is possible.
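To get a feeling for what Enumeration gives us out of the box, here is a small sketch that reuses the WeekDay example and calls a few of the methods it inherits (values, withName and id). The wrapper object EnumerationSketch is just a name picked for this illustration.

```scala
object EnumerationSketch {
  object WeekDay extends Enumeration {
    type WeekDay = Value
    val Mon, Tue, Wed, Thu, Fri, Sat, Sun = Value
  }
  import WeekDay._

  def isWorkingDay(d: WeekDay): Boolean = !(d == Sat || d == Sun)

  // values is a sorted set of all Value instances created so far
  val workingDays: List[WeekDay] = WeekDay.values.toList.filter(isWorkingDay)

  // look a value up by the name of the val it was assigned to
  val parsed: WeekDay = WeekDay.withName("Wed")

  // ids are assigned in creation order, starting at 0
  val firstId: Int = Mon.id
}
```

Note that there is no place here to attach a method like goodDay to each individual value, which is exactly the limitation mentioned above.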

16.2. @enum

The @enum annotation is currently only a proposal, and is being discussed on scala-internals in the thread: enumeration must die.

Together with annotation macros, which are coming in the future, we may be getting the @enum annotation, which is described somewhat in the related Scala Improvement Process document: [enum-sip].

@enum

class Day {

Monday { def goodDay = false }

Tuesday { def goodDay = false }

Wednesday { def goodDay = false }

Thursday { def goodDay = false }

Friday { def goodDay = true }

def goodDay: Boolean

}

17. Value Class

Value classes have been around in Scala for a long time internally, and you’ve used them many times already, because all numbers in Scala use this compiler trick to avoid boxing and unboxing numeric values between int and scala.Int etc. As a quick reminder, let’s recall that Array[Int] is an actual JVM int[] (or for bytecode-happy people, it’s the JVM runtime type called [I), which has tons of performance implications, but in short: arrays of numbers are fast, arrays of references not as much.

OK, so we now know the compiler has fancy tricks to avoid boxing ints into Ints when it doesn’t have to. Let’s see how this feature is exposed to us, the end users, since Scala 2.10.x. The feature is called "value classes" and is fairly simple to apply to your existing classes. Using them is as simple as adding extends AnyVal to your class and following a few rules listed below. If you’re not familiar with AnyVal, this might be a good moment for a quick refresher by looking at Section Unified Type System - Any, AnyRef, AnyVal.

For our example let’s implement a Meter which will serve as a wrapper for a plain Double and be able to convert the number of meters into a value of type Foot. We need this class because no-one understands the imperial unit system ;-) On the downside though, why should we pay the runtime overhead of having an object around a double (that’s quite a few bytes (!) per instance) if for 95% of the time we’ll be using the plain meter value - because it’s a project for the European market? Value classes to the rescue!

case class Meter(value: Double) extends AnyVal {

// 1 foot = 0.3048 meters, so meters are divided to get feet

def toFeet: Foot = Foot(value / 0.3048)

}

case class Foot(value: Double) extends AnyVal {

def toMeter: Meter = Meter(value * 0.3048)

}

We’ll be using Case (Value) Classes in all our examples here, but it’s not technically required to do so (although very convenient). You could implement a Value Class using a normal class with one val parameter instead, but using case classes is usually the best way to go. Why only one parameter, you might ask? This is because we’ll try to avoid wrapping the value, and this only makes sense for single values, otherwise we’d have to keep a Tuple around somewhere, which gets fuzzy very quickly, and we’d lose the performance of not-wrapping anyway. So remember - value classes work only for 1 value, although no-one said that that parameter must be a primitive (!). It can be a normal class, like Fruit or Person, and we’ll still be able to avoid wrapping it in the Value Class at times.

All you need to do to define a Value Class is to have a class with only one public val parameter extending AnyVal, and follow a few restrictions around it. That one parameter does not have to be a primitive, it can be anything. The restrictions (or limitations) on the other hand are a longer list: for example, a value class cannot contain any members other than defs, and cannot be extended. For a full list and more in-depth examples refer to the Scala documentation’s Value Classes - summary of limitations.

OK, so now that we’ve got our Meter and Foot Value Case Classes, let’s first examine how the generated bytecode has changed from a normal case class when we added the extends AnyVal part, making Meter a value class:

// case class

scala> :javap Meter

public class Meter extends java.lang.Object implements scala.Product,scala.Serializable{

public double value();

public Foot toFeet();

// ...

}

scala> :javap Meter$

public class Meter$ extends scala.runtime.AbstractFunction1 implements scala.Serializable{

// ... (skipping not interesting in this use-case methods)

}

And the bytecode generated for the value class is below:

// case value class

scala> :javap Meter

public final class Meter extends java.lang.Object implements scala.Product,scala.Serializable{

public double value();

public Foot toFeet();

// ...

}

scala> :javap Meter$

public class Meter$ extends scala.runtime.AbstractFunction1 implements scala.Serializable{

public final Foot toFeet$extension(double);

// ...

}

There’s basically one thing that should catch our attention here: the Meter’s companion object, when Meter is created as a Value Class, has gained a new method - toFeet$extension(double): Foot. Before, this method was an instance method of the Meter class, and it did not take any arguments (so it was: toFeet(): Foot). The generated method is marked as "extension", and this is actually exactly the name we give to such methods (.NET developers might see where this is headed already).

As our goal with Value Classes is to avoid having to allocate the entire value object, and instead work directly on the wrapped value we have to stop using instance methods — as they would force us into having an instance of the Wrapper (Meter) class. What we can do instead is promoting the instance method, into an extension method, which we’ll store in the companion object of Meter, and instead of using the value: Double field of the instance, we’ll pass in the Double value each time we’ll be calling the extension method.

Extension methods serve the same purpose as Implicit conversions (which are a more general and more powerful utility), yet are better than conversions in one simple way: they avoid having to allocate the "Wrapper" object, which implicit conversions would otherwise use to provide the "added methods". Extension methods take the route of rewriting the generated methods a little, so that they take the type-to-be-extended as their 1st argument. So for example, if you write 3.toHexString, this method is added to Int via an implicit conversion, but as the target is class RichInt extends AnyVal, so a Value Class, the call does not force an allocation of RichInt, and instead will be rewritten into RichInt$.$MODULE$.toHexString$extension(3), thus avoiding the allocation of RichInt.
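The same trick is available for our own enrichments. The sketch below combines an implicit class with extends AnyVal, which is the combination RichInt relies on; the names ExtensionSketch, IntOps and squared are invented for this example and are not part of the standard library.

```scala
object ExtensionSketch {
  // An implicit value class: adds `squared` to Int. Because it extends
  // AnyVal, the compiler can route the call through a static-like
  // extension method instead of allocating an IntOps wrapper.
  implicit class IntOps(val self: Int) extends AnyVal {
    def squared: Int = self * self
  }

  val nine: Int = 3.squared
}
```

Running :javap on the companion of such a class should show a squared$extension method, analogous to toFeet$extension above.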

Let’s now use our newly gained knowledge and investigate what the compiler will actually do for us in the Meter example. Right next to the source code "as we write it", the comments explain what the compiler actually generates (thus, what happens when we run this code):

// source code // what the emitted bytecode actually does

val m: Meter = Meter(12.0) // store 12.0 (1)

val d: Double = m.value * 2 // double multiply (12.0 * 2.0), store (2)

val f: Foot = m.toFeet // call Meter$.$MODULE$.toFeet$extension(12.0) (3)

| 1 | One might expect an allocation of a Meter object here, but as we’re using a Value Class, only the wrapped value gets stored. That is, we’re working with a plain double from here on at runtime (assignment and typechecking still verify "as if" this was a Meter instance) |

| 2 | Here we access the value of the Value Class (the name of the field does not matter). Notice that the runtime operates on raw doubles here, and thus does not have to call a value method, like it usually would if we used a plain case class. |

| 3 | Here we seem to be calling an instance method defined on Meter, but in fact the compiler has substituted this call with an extension method call, where it passes in the 12.0 value. We get back a Foot instance… oh wait! But Foot was also defined as a Value Class, so at runtime we’d again get back a plain double! Source-code-wise we don’t have to care though - it’s nice if we get the performance gain from using a Value Class, but it does not affect our source code in any way. |

These are the basics around extension methods and value classes. If you want to read more about the different edge-cases around them, please refer to the official documentation’s section about Value Classes, where Mark Harrah explains them very well with tons of examples, so I won’t be duplicating his effort here beyond the basic introduction :-)

18. Type Class ✗

Type Classes belong to the most powerful patterns available to use in Scala, and can be summed up (if you like fancy wording) as "ad-hoc polymorphism", which should be understandable once we get to the end of this section.

The typical problem Type Classes solve for us is providing extensible APIs without explicitly binding two classes together.

An example of such a strict binding, which we would be able to avoid using Type Classes, is extending a Writable interface in order to make our custom data type writable:

// no type classes yet

trait Writable[Out] {

def write: Out

}

case class Num(a: Int, b: Int) extends Writable[Json] {

def write = Json.toJson(this)

}

Using this style, of just extending and implementing an interface, we have bound our Num to Writable and also, we had to provide the implementation for write, "right there, right now", which makes it harder for others to provide a different write implementation - they would have to subclass Num! Another pain point here is that we cannot extend two times from the same trait, providing different serialization targets (you can’t both extend Writable[Json] and Writable[Protobuf]).

All these problems can be addressed by using a Type Class based approach instead of directly extending Writable[Out]. Let’s give it a shot, and explain in detail how this is actually working:

trait Writes[In, Out] { (1)

def write(in: In): Out

}

trait Writable[Self] { this: Self => (2)

def write[Out]()(implicit writes: Writes[Self, Out]): Out =

writes write this

}

implicit val jsonNum = new Writes[Num, Json] { (3)

// Json.toJson is the same placeholder serializer as in the first example

def write(n: Num): Json = Json.toJson(n)

}

case class Num(a: Int) extends Writable[Num]

| 1 | First we define our Type Class. Its API is similar to the previous Writable trait, but instead of mixing it into the class that will be written, we keep it separate, and in order to know what it’s defined for we use the In type parameter |

| 2 | Next we change our Writable trait to be parameterized with Self, and the target serialization type is moved onto the signature of write. It also now requires an implicit Writes[Self, Out] implementation, which handles the serializing: that is our Type Class at work. |

| 3 | This is the actual implementation of the Type Class. Notice that we mark the instance as implicit, so it’s available for the write()(implicit Writes[_, _]) method |
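To see the whole thing working end to end, here is a self-contained sketch of the Writes / Writable pair. Take it as one possible way to wire it up, under a few assumptions: Json is a tiny stand-in case class invented for this example (not a real JSON library), and the self type this: Self => is added so that this can be passed where a Self is expected.

```scala
object TypeClassSketch {
  // Hypothetical stand-in for a real JSON AST, just wraps the rendered text
  final case class Json(raw: String)

  // the Type Class: knows how to turn an In into an Out
  trait Writes[In, Out] {
    def write(in: In): Out
  }

  // mixed into data types; the self type guarantees `this` is a Self
  trait Writable[Self] { this: Self =>
    def write[Out]()(implicit writes: Writes[Self, Out]): Out =
      writes.write(this)
  }

  final case class Num(a: Int) extends Writable[Num]

  // two independent serialization targets for the same data type
  implicit val jsonNum: Writes[Num, Json] = new Writes[Num, Json] {
    def write(n: Num): Json = Json("{\"a\":" + n.a + "}")
  }
  implicit val stringNum: Writes[Num, String] = new Writes[Num, String] {
    def write(n: Num): String = "Num of " + n.a
  }

  val asJson: Json = Num(1).write[Json]()
  val asString: String = Num(1).write[String]()
}
```

Notice that Num itself knows nothing about Json or String, and that anyone can add another Writes[Num, Something] instance without touching Num, which addresses both pain points of the first version.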

Universal Trait ✗

Universal traits are traits that extend Any, they should only have `def`s, and no initialization code.

TODO TODO

| TODO IMPLEMENT DOCS :-) |
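Until this section is fleshed out, here is a minimal sketch of what such a trait can look like. The names UniversalTraitSketch, Describable and Celsius are made up for this illustration. Keep in mind that calling a method inherited from a universal trait on a value class is one of the cases where the wrapper instance does get allocated.

```scala
object UniversalTraitSketch {
  // a universal trait: extends Any, contains only defs, no initialization
  trait Describable extends Any {
    def describe: String = "value: " + toString
  }

  // a value class may mix in universal traits (but no other traits)
  final class Celsius(val degrees: Double) extends AnyVal with Describable {
    override def toString: String = degrees.toString + "C"
  }

  val description: String = new Celsius(21.5).describe
}
```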

19. Self Type Annotation

Self Types are used in order to "require" that, if another class uses this trait, it should also provide implementation of whatever it is that you’re requiring.

Let’s look at an example where a service requires some Module which provides other services. We can state this using the following Self Type Annotation:

trait Module {

lazy val serviceInModule = new ServiceInModule

}

trait Service {

this: Module =>

def doTheThings() = serviceInModule.doTheThings()

}

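The second line can be read as "I’m a Module". At first glance this might seem to yield exactly the same result as extending Module, so how does it differ from extending Module right away? With a self type, Service does not inherit from Module. It only declares that whoever instantiates it must mix a Module in, which means that someone will have to give us this Module at instantiation time: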

trait TestingModule extends Module { /*...*/ }

new Service with TestingModule

If you were to try to instantiate it without mixing in the required trait it would fail like this:

new Service {}

// class Service cannot be instantiated because it does not conform to its self-type Service with Module

// new Service {}

// ^

You should also keep in mind that it’s OK to specify more than one trait when using the self-type syntax. And while we’re at it, let’s discuss why it is called self-type (except for the "yeah, it makes sense" factor). Turns out a popular style (and possibility) to write it looks like this:

class Service {

self: MongoModule with APIModule =>

def delegated = self.doTheThings()

}

In fact, you can use any identifier (not just this or self) and then refer to it from your class.
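Putting the pieces of this section together gives a small runnable sketch. ServiceInModule was not defined in the snippets above, so the version here (returning a String) is an assumption made for the example, as is the wrapper object SelfTypeSketch.

```scala
object SelfTypeSketch {
  // assumed shape of the service provided by the module
  class ServiceInModule {
    def doTheThings(): String = "done"
  }

  trait Module {
    lazy val serviceInModule = new ServiceInModule
  }

  trait Service {
    this: Module => // "whoever instantiates me must also be a Module"

    def doTheThings(): String = serviceInModule.doTheThings()
  }

  trait TestingModule extends Module

  // the requirement is satisfied at instantiation time
  val service = new Service with TestingModule
  val result: String = service.doTheThings()
}
```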

20. Phantom Type

Phantom Types are very true to their name, even if it’s a weird one, and can be explained as "Types that are never instantiated". Instead of using them directly, we use them to enforce some logic even more strictly, using our types.

The example we’ll look at is a Service class, which has start and stop methods. Now we want to guarantee that you cannot (the type system won’t allow you to) start an already started service, and vice-versa.

Let’s start with preparing our "marker traits", which don’t contain any logic - we will only use them in order to express the state of a service in its type:

sealed trait ServiceState

final class Started extends ServiceState

final class Stopped extends ServiceState

Note that making the ServiceState trait sealed assures that no-one can suddenly add another state to our system.

We also define the leaf types here to be final, so no-one can extend them, and add other states to the system.

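The sealed keyword applies directly to the type it was applied to, not to its subtypes. So you cannot extend ServiceState from other files, but a subtype of it that is neither sealed nor final could still be extended elsewhere, which is why the leaf types above are marked final.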

Having those prepared, let’s finally dive in into using them as Phantom Types.

First let’s define a class Service, which takes a State type parameter - notice here that we don’t use any value of type State in this class!

It’s just there, unused, like a ghost, or Phantom - which is where its name comes from.

class Service[State <: ServiceState] private () {

def start[T >: State <: Stopped]() = this.asInstanceOf[Service[Started]]

def stop[T >: State <: Started]() = this.asInstanceOf[Service[Stopped]]

}

object Service {

def create() = new Service[Stopped]

}

So in the companion object we create a new instance of the Service, at first it is Stopped.

The Stopped state conforms to the type bounds of the type parameter (<: ServiceState) so it’s all good.

The interesting things happen when we want to start / stop an existing Service. The Type bounds defined on the start method, for example, are only valid for one value of T, which is Stopped. The transition to the opposite state is a no-op in our example: we return the same instance, and explicitly cast it into the required state. Since nothing is actually using this type, you won’t bump into class cast exceptions during this conversion.

Let’s now investigate the above code using the REPL, which will act as a nice finisher for this section.

scala> val initiallyStopped = Service.create() (1)

initiallyStopped: Service[Stopped] = Service@337688d3

scala> val started = initiallyStopped.start() (2)

started: Service[Started] = Service@337688d3

scala> val stopped = started.stop() (3)

stopped: Service[Stopped] = Service@337688d3

scala> stopped.stop() (4)

<console>:16: error: inferred type arguments [Stopped] do not conform to method stop's

type parameter bounds [T >: Stopped <: Started]

stopped.stop()

^

scala> started.start() (5)

<console>:15: error: inferred type arguments [Started] do not conform to method start's

type parameter bounds [T >: Started <: Stopped]

started.start()

| 1 | Here we create the initial instance, it starts in Stopped state |

| 2 | Starting a Stopped service is OK, returned type is Service[Started] |

| 3 | Stopping a Started service is OK, returned type is Service[Stopped] |

| 4 | However stopping an already stopped service (Service[Stopped]) is not valid, and will not compile. Notice the printed type bounds! |

| 5 | Similarly, starting an already started service (Service[Started]) is not valid, and will not compile. Notice the printed type bounds! |

As you see, Phantom Types are yet another great facility to make our code even more type-safe (or shall I say "state-safe"!?).
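A variation you may run into encodes the same state machine with implicit evidence instead of type bounds. Note that this is a different technique than the one used above: the method asks the compiler to prove State =:= Stopped, rather than squeezing a type parameter T between two bounds. The sketch below assumes the same marker types; the wrapper object PhantomSketch is just a name for this example.

```scala
object PhantomSketch {
  sealed trait ServiceState
  final class Started extends ServiceState
  final class Stopped extends ServiceState

  class Service[State <: ServiceState] private () {
    // only callable when the compiler can prove State is exactly Stopped
    def start()(implicit ev: State =:= Stopped): Service[Started] =
      this.asInstanceOf[Service[Started]]

    // only callable when the compiler can prove State is exactly Started
    def stop()(implicit ev: State =:= Started): Service[Stopped] =
      this.asInstanceOf[Service[Stopped]]
  }

  object Service {
    def create(): Service[Stopped] = new Service[Stopped]
  }

  val stopped = Service.create()
  val started = stopped.start()
  val stoppedAgain = started.stop()
  // started.start() // does not compile: no evidence for Started =:= Stopped
}
```

Just like before, the transitions return the very same instance, only its static type changes.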

If you’re curious where these things are used in "not very crazy libraries", a great example here is Foursquare Rogue (the MongoDB query DSL) where it’s used to assure a query builder is in the right state - for example that limit(3) was called on it.


21. Structural Type

Structural Types are often described as "type-safe duck typing", which is quite a good comparison if you want to gain some intuition for it.

So far we’ve only been thinking about types in terms of "does it implement interface X?". With structural types we can go a step further and start reasoning about the structure of a given object (hence the name). When checking whether a type matches using structural typing, we need to change our question to: "does it have a method with this signature?".

Let’s look at a very popular use-case in action, to see why it is so powerful. Imagine that you have many classes of things that can be closed. In Java-land one would usually implement the java.io.Closeable interface in order to make it possible to write some common Closeable utility classes (in fact, Google Guava provides such a utility class). Now imagine that someone also implemented a MyOwnCloseable class but didn’t extend java.io.Closeable. Your Closeables library would not work here due to the static typing. You would not be able to pass instances of MyOwnCloseable into it. Let’s solve this problem using Structural Typing:

type JavaCloseable = java.io.Closeable

// reminder, its body is: { def close(): Unit }

class MyOwnCloseable {

def close(): Unit = ()

}

// method taking a Structural Type

def closeQuietly(closeable: { def close(): Unit }) =

try {

closeable.close()

} catch {

case ex: Exception => // ignore...

}

// accepts a java.io.StringReader (implements Closeable):

closeQuietly(new java.io.StringReader("example"))

// accepts a MyOwnCloseable

closeQuietly(new MyOwnCloseable)

The structural type is defined as a parameter to the method. It basically says that the only thing we expect from the type is that it should have this method. It could have more methods - so it’s not an exact match but the minimal set of methods a type has to define in order to be valid.

Another fact to keep in mind when using Structural Typing is that it actually has huge (negative) runtime performance implications, as it is actually implemented using reflection. We won’t look into the byte code for this case, but remember that it’s very easy to investigate the generated bytecode for scala (or java) classes, by using :javap in the Scala REPL. So you should try this out yourself.

Before we move over to the next subject, let’s briefly touch on a small but neat style tip. Imagine that your Structural Type is quite big, an example would be a type representing something that you can open, work on, and then must close. By using a Type Alias (described in detail in another section) with a Structural Type, we’re able to separate the type definition from the method, where we want to take in such instance:

type OpenerCloser = {

def open(): Unit

def close(): Unit

}

def on(it: OpenerCloser)(fun: OpenerCloser => Unit) = {

it.open()

fun(it)

it.close()

}

By using this type alias we’ve made the def much cleaner. I’d highly recommend type aliasing bigger structural types. And one last warning, always check if you really need to reach for structural typing, and cannot do it in some other way, considering the negative performance impact.
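Here is a runnable sketch of the aliased version. A few things in it are assumptions made for the example: the Door class and its events log are invented, close() is moved into a finally block so it also runs when fun throws, and scala.language.reflectiveCalls is imported to acknowledge the reflective calls mentioned above (without it the compiler emits a feature warning).

```scala
object StructuralSketch {
  // acknowledges that calls through the structural type use reflection
  import scala.language.reflectiveCalls

  type OpenerCloser = {
    def open(): Unit
    def close(): Unit
  }

  // Hypothetical resource, it does not extend any shared interface
  final class Door {
    var events: Vector[String] = Vector.empty
    def open(): Unit = { events = events :+ "open" }
    def close(): Unit = { events = events :+ "close" }
  }

  def on(it: OpenerCloser)(fun: OpenerCloser => Unit): Unit = {
    it.open()
    try fun(it) finally it.close()
  }

  val door = new Door
  on(door) { _ => door.events = door.events :+ "work" }
}
```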

22. Path Dependent Type

This Type allows us to type-check on a Type internal to another class. This may seem weird at first, but is very intuitive once you see it:

class Outer {

class Inner

}

val out1 = new Outer

val out1in = new out1.Inner // concrete instance, created from inside of Outer

val out2 = new Outer

val out2in = new out2.Inner // another instance of Inner, with the enclosing instance out2

// the path dependent type. The "path" is "inside out1".

type PathDep1 = out1.Inner

// type checks

val typeChecksOk: PathDep1 = out1in

// OK

val typeCheckFails: PathDep1 = out2in

// <console>:27: error: type mismatch;

// found : out2.Inner

// required: PathDep1

// (which expands to) out1.Inner

// val typeCheckFails: PathDep1 = out2in

The key to understand here is that "each Outer instance has its own Inner class", so it’s a different Type - dependent on which path we use to get there.

This kind of typing is useful because it lets us enforce that a type comes from inside one concrete parameter. An example of a signature using this typing would be:

class Parent {

class Child

}

class ChildrenContainer(p: Parent) {

type ChildOfThisParent = p.Child

def add(c: ChildOfThisParent) = ???

}

Using the path dependent type we have now encoded in the type system, the logic, that this container should only contain children of this parent - and not "any parent".

We’ll see how to require the "child of any parent" Type in the section about Type Projections soon.
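A runnable variation of the container may help here. In this sketch the parent is exposed as a val (an assumption made so that callers can name the path container.p.Child), and the children list and size method are added just to have something to observe.

```scala
object PathDependentSketch {
  class Parent {
    class Child
  }

  class ChildrenContainer(val p: Parent) {
    private var children: List[p.Child] = Nil

    // accepts only children whose enclosing instance is exactly `p`
    def add(c: p.Child): Unit = { children = c :: children }

    def size: Int = children.size
  }

  val container = new ChildrenContainer(new Parent)
  container.add(new container.p.Child)

  val stranger = new Parent
  // container.add(new stranger.Child) // does not compile: wrong path
}
```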

23. Type Projection

Type Projections are similar to Path Dependent Types in the way that they allow you to refer to a type of an inner class. In terms of syntax, you path your way into the structure of inner classes, splitting them with a # sign. Let’s start out by showing the first and main difference between path dependent types (the "." syntax) and type projections (the "#" syntax):

// our example class structure

class Outer {

class Inner

}

// Type Projection (and alias) referring to Inner

type OuterInnerProjection = Outer#Inner

val out1 = new Outer

val out1in = new out1.Inner
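The difference becomes visible once we write methods against both kinds of types. In this sketch the two method names are made up for the illustration: a parameter typed with the projection Outer#Inner accepts the Inner of any Outer, while one typed with out1.Inner accepts only instances created from out1.

```scala
object ProjectionSketch {
  class Outer {
    class Inner
  }

  val out1 = new Outer
  val out2 = new Outer
  val out1in = new out1.Inner
  val out2in = new out2.Inner

  // type projection: the Inner of *any* Outer instance
  def acceptsAnyInner(in: Outer#Inner): Boolean = true

  // path dependent type: only the Inner of out1
  def acceptsOnlyOut1(in: out1.Inner): Boolean = true

  val both = acceptsAnyInner(out1in) && acceptsAnyInner(out2in)
  val onlyOut1 = acceptsOnlyOut1(out1in)
  // acceptsOnlyOut1(out2in) // does not compile: found out2.Inner
}
```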

Another nice intuition about path dependent vs. projections is that Type Projections can be used for "type level programming" ;-)

Existential Types

Existential Types are something that deeply relates to Type Erasure, which JVM languages "have to live with".

val thingy: Any = ???

thingy match {

case l: List[a] =>

// lower case 'a', matches all types... what type is 'a'?!

}

We don’t know the type of a, because of runtime type erasure. We know though that List is a type constructor, * -> *, so there must have been some type it could have used to construct a valid List[T]. This "some type" is the existential type!

Scala provides a shortcut for it:

List[_]

// ^ some type, no idea which one!

Let’s say you’re working with some Abstract Type Member, that in our case will be some Monad. We want to force users of our class into using only Cool instances within this Monad, because, for example, only for these Types does our Monad have any meaning. We can do this via Type Bounds on the Existential Type T:

type Monad[T] forSome { type T >: Cool }
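As a small illustration of the List[_] shortcut in practice, the sketch below (describe is a name invented here) matches on a list without knowing its element type. Since the element type is erased at runtime, all we can rely on are operations that work for any element type, such as size.

```scala
object ExistentialSketch {
  def describe(thingy: Any): String = thingy match {
    // "a List of some type, no idea which one"
    case l: List[_] => "a list of " + l.size + " elements"
    case _          => "something else"
  }
}
```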

24. Specialized Types

24.1. @specialized

Type specialization is actually more of a performance technique than plain "type system stuff", but nevertheless it’s something very important and worth keeping in mind if you want to write well performing collections. For our example we’ll be implementing a very useful collection called Parcel[A], which can hold a value of a given type. How useful indeed!

case class Parcel[A](value: A)

That’s our basic implementation. What are the drawbacks here? Well, as A can be anything, it will be represented as a Java object, even if we’d only ever put Int values into parcels. So the above class would cause us to box and unbox primitive values, because the container is working on objects:

val i: Int = Int.unbox(Parcel.apply(Int.box(1)))

If you’ve already read about Value Classes you might have noticed that Parcel could be very nicely implemented using those instead! That is indeed true. However, specialized has been around in Scala since 2.8.1, while Value Classes were introduced only recently, in 2.10.x. Also, you can specialize on more than one value (although it does exponentially (sic!) grow the generated code!), while with Value Classes you’re constrained to one value.

As we all know, boxing when you don’t really need to is not a good idea, as it generates more work for the runtime by converting the int "back and forth" to an Int object. What could we do to fix this problem? One of the tricks to apply here is to "specialize" our Parcel for all primitive types (let’s say only Long and Int are good enough for now), like this:

case class Parcel[A](value: A) {

def something: A = ???

}

// specialization "by hand"

case class IntParcel(intValue: Int) {

def something: Int = /* works on low-level Int, no wrapping! */ ???

}

case class LongParcel(longValue: Long) {

def something: Long = /* works on low-level Long, no wrapping! */ ???

}

The implementations inside IntParcel and LongParcel will efficiently avoid boxing, as they work directly on the primitives, and need not reach into the object realm. Now we just have to manually select which *Parcel we want to use, depending on our use-case.

That’s all nice and good but… the code has basically just become far less maintainable, with N implementations, one for each primitive that we want to support (which could be any of: int, long, byte, char, short, float, double, boolean, void, plus the Object case)! That’s a lot of boilerplate to maintain.

Since we’re now familiar with the idea of specialization, and that it’s not really as nice to implement by hand, let’s see how Scala helps us out here by introducing the @specialized annotation:

case class Parcel[@specialized A](value: A)

So we’re applying the @specialized annotation to the type parameter A, thus instructing the compiler to generate all specialized variants of this class - that is: ByteParcel, IntParcel, LongParcel, FloatParcel, DoubleParcel, BooleanParcel, CharParcel, ShortParcel and even VoidParcel (not actual names of the implementors, but you get the idea). Applying the "right" version is also taken up by the compiler, so we can write our code without caring if a class is specialized or not, and the compiler will do its best to use the specialized version (if available):

val pi = Parcel(1) // will use `int` specialized methods

val pl = Parcel(1L) // will use `long` specialized methods

val pb = Parcel(false) // will use `boolean` specialized methods

val po = Parcel("pi") // will use `Object` methods

"Great, so let’s use it everywhere!" — is a common reaction when people find out about specialization, as it can speed-up low level operations multiple times with lowering memory usage at the same time! Sadly, it comes at a high price: the generated code quickly becomes huge when used with multiple parameters like this:

class Thing[A, B](@specialized a: A, @specialized b: B)

In the above example we’re using the second style of applying specialization - right onto the parameters - the effect is still the same as if we’d specialize A and B directly. Please notice that the above code would generate 8 * 8 = 64 (sic!) implementations, as it has to take care of cases like "A is an int, and B is an int" as well as "A is a boolean, but B is a long" — you can see where this is heading. In fact the number of generated classes is around 2 * 10^(nr_of_type_specializations), which easily reaches thousands of classes for already 3 type parameters!

There are ways to limit this exponential explosion, for example by limiting the specialization target types. Let’s say our Parcel will be used mostly with integers, and never with floating point numbers. Knowing this, we can ask the compiler to only specialize for Long and Int like this:

case class Parcel[@specialized(Int, Long) A](value: A)
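From the caller’s point of view nothing changes, which the following sketch tries to show. It uses a plain (non-case) class to keep the example small, and the isSameAs method is made up for the illustration. Whether a specialized variant is actually picked is decided by the compiler, so the comments describe the expected behaviour on Scala 2 rather than something the code itself checks.

```scala
object SpecializedSketch {
  // specialized only for Int and Long, everything else uses the generic version
  class Parcel[@specialized(Int, Long) A](val value: A) {
    def isSameAs(other: A): Boolean = value == other
  }

  val pi = new Parcel(1)    // expected to use the `int` variant
  val pl = new Parcel(1L)   // expected to use the `long` variant
  val po = new Parcel("pi") // generic, Object based variant
}
```

Note that a specialized class cannot usefully be final, since the specialized variants are generated as subclasses of the generic one.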

Let’s also look into the bytecode a little bit this time, by using :javap Parcel:

// Parcel, specialized for Int and Long

public class Parcel extends java.lang.Object implements scala.Product,scala.Serializable{

public java.lang.Object value(); // generic version, "catch all"

public int value$mcI$sp(); // int specialized version

public long value$mcJ$sp(); // long specialized version

public boolean specInstance$(); // method to check if we're a specialized class impl.

}

As you can see, the compiler has prepared additional specialized methods for us, such as value$mcI$sp() returning an int and the same style of method for long. One other method worth mentioning here is specInstance$ which returns true if the used implementation is a specialized class.

If you’re curious, currently these classes are specialized in Scala (list may be incomplete): Function0, Function1, Function2, Tuple1, Tuple2, Product1, Product2, AbstractFunction0, AbstractFunction1, AbstractFunction2. Due to how costly it is to specialize beyond 2 parameters, the trend is to not specialize for more params, although it is certainly possible.

A prime example of why we want to avoid boxing is also memory efficiency. Imagine a boolean: it would be great if it were stored as one bit, but sadly it isn’t (not on any JVM I know of). For example, on HotSpot a boolean is represented as an int, so it takes 4 bytes of space. Its cousin java.lang.Boolean on the other hand has 8 bytes of object header, as does any Java object, then it stores the boolean inside (another 4 bytes), and due to the Java Object Layout alignment rules, the space taken up by this object will be aligned to 16 bytes (8 for object header, 4 for the value, 4 bytes of padding). That’s yet another reason why we want to avoid boxing so badly.

24.2. Miniboxing ✗

| This is not a Scala feature, but can be used with scalac as a compiler plugin. |

We’ve explained in the previous section that specialization is quite powerful, yet at the same time it’s a bit of a "compiler bomb", with its exponential growth potential. Turns out there is already a working proof of concept that takes away this problem. Miniboxing is a compiler plugin achieving the same result as @specialized but without generating thousands of classes.

| TODO, there’s a project from within EPFL to make specialization more efficient: Scala Miniboxing |

25. Type Lambda ✗

In type lambdas we’ll be using Path Dependent as well as Structural Types, so if you skipped those sections you may want to go back to them.

Before we look at Type Lambdas, let’s take a step back and remind ourselves a bit about functions and currying.

class EitherMonad[A] extends Monad[({type λ[α] = Either[A, α]})#λ] {

def point[B](b: B): Either[A, B]

def bind[B, C](m: Either[A, B])(f: B => Either[A, C]): Either[A, C]

}
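Since the Monad trait used above is not shown in this article, here is a self-contained sketch of the same trick using a small Functor trait instead. Functor and eitherFunctor are names defined only for this example. The type lambda fixes the left type of Either and leaves the right one open, which turns the two-parameter Either into the one-parameter type constructor that Functor expects.

```scala
object TypeLambdaSketch {
  // expects a type constructor taking exactly one type parameter
  trait Functor[F[_]] {
    def map[A, B](fa: F[A])(f: A => B): F[B]
  }

  // Either takes two parameters, so we "partially apply" it:
  // λ[α] = Either[L, α] has only one parameter left
  def eitherFunctor[L]: Functor[({ type λ[α] = Either[L, α] })#λ] =
    new Functor[({ type λ[α] = Either[L, α] })#λ] {
      def map[A, B](fa: Either[L, A])(f: A => B): Either[L, B] = fa match {
        case Right(a) => Right(f(a))
        case Left(l)  => Left(l)
      }
    }

  val ok: Either[String, Int] =
    eitherFunctor[String].map(Right(20): Either[String, Int])(_ + 1)

  val failed: Either[String, Int] =
    eitherFunctor[String].map(Left("boom"): Either[String, Int])(_ + 1)
}
```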

26. Union Type ✗

| This is incomplete and work in progress, refer to Miles' blog (linked below) for full details though :-) |

Let’s start discussing this Type by remembering set theory, and viewing the already known construction A with B as "Intersection Type":

Why? Well, the only objects that conform to this type are those which have both the type A and the type B, so in set theory, this would be an intersection. On the other hand, let’s think about what a Union Type is then:

It’s a union of these two sets, so set-wise it’s a type A or a type B. Our task at hand is to introduce such a type using Scala’s type system. While not being a first-class construct in Scala (it’s not built in), they are pretty easy to implement and use ourselves. Miles Sabin explains this technique in the blog post Unboxed union types in Scala via the Curry-Howard isomorphism, if you’re curious for an in-depth explanation.

type |∨|[T, U] = { type λ[X] = ¬¬[X] <:< (T ∨ U) }

def size[T : (Int |∨| String)#λ](t : T) = t match {

case i : Int => i

case s : String => s.length

}
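The snippet above relies on a few helper aliases (¬, ∨ and ¬¬) that are not shown. The sketch below fills them in following the encoding from Miles Sabin’s post, as far as I can reproduce it here for Scala 2, so treat it as an approximation and refer to the original post for the authoritative version. The wrapper object UnionSketch is a name made up for this example.

```scala
object UnionSketch {
  // negation of a type, encoded as a function into Nothing
  type ¬[A] = A => Nothing
  // De Morgan: A or B == not (not A and not B)
  type ∨[T, U] = ¬[¬[T] with ¬[U]]
  // double negation
  type ¬¬[A] = ¬[¬[A]]
  // usable as a context bound: evidence that X is T or U
  type |∨|[T, U] = { type λ[X] = ¬¬[X] <:< (T ∨ U) }

  def size[T: (Int |∨| String)#λ](t: T): Int = t match {
    case i: Int    => i
    case s: String => s.length
  }

  val fromInt = size(42)
  val fromString = size("abc")
  // size(1.5) // does not compile: Double is neither Int nor String
}
```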

27. Delayed Init

Since we started talking about the "weird" types in Scala, we can’t let this one go without a dedicated section for it. DelayedInit is actually a "compiler trick" above anything else, and not really tremendously important for the type system itself, but once you understand it, you’ll know how scala.App actually works, so let’s dive into our example with App:

object Main extends App {

println("Hello world!")

}

By looking at this code, and applying our basic Scala knowledge to it, we might think "Ok, so the println is actually in the constructor of the Main class!". And this would usually be true, but not in this case, since we inherited the DelayedInit trait - as App extends it:

trait App extends DelayedInit {

// code here ...

}

And let’s take a look at the full source code of the DelayedInit trait right away:

trait DelayedInit {

def delayedInit(x: => Unit): Unit

}

As you can see, it does not contain any implementation - all the work around it is actually performed by the compiler, which will treat all classes and objects inheriting DelayedInit in a special way (note: traits will not be rewritten like this). The special treatment goes like this:

-

imagine your class/object body is a function, doing all these things that are in the class/object body,

-

the compiler creates this function for you, and will pass it into the delayedInit(x: => Unit) method (notice the call-by-name in the parameter).

Let’s quickly give an example for this, and then we’ll re-implement what App does for us, but by hand (and the help of delayedInit):

// we write:

object Main extends DelayedInit {

println("hello!")

}

// the compiler emits (conceptually, this is pseudocode and not valid Scala):

object Main extends DelayedInit {

def delayedInit(x: => Unit = { println("hello!") }) = // impl is left for us to fill in

}

Using this mechanism you can run the body of your class whenever you want (… maybe never?). Since we now know how delayedInit works, let’s implement our own version of scala.App (which actually does it in exactly the same way).

import scala.collection.mutable.ListBuffer

trait SimpleApp extends DelayedInit {

private val initCode = new ListBuffer[() => Unit]

override def delayedInit(body: => Unit): Unit = {

initCode += (() => body)

}

def main(args: Array[String]) = {

println("Whoa, I'm a SimpleApp!")

for (proc <- initCode) proc()

println("So long and thanks for all the fish!")

}

}

// Running the object below would print:

object Test extends SimpleApp {

// Whoa, I'm a SimpleApp!

println(" Hello World!") // Hello World!

// So long and thanks for all the fish!

}

That’s it. Since the rewriting is not applied to traits, the code in our SimpleApp is not modified by extending DelayedInit. Thanks to this, we can make use of the delayedInit method and accumulate any "class bodies" that we encounter (imagine we’re dealing with a deep hierarchy of classes here, in which case delayedInit would be called multiple times), and then we simply implement the main method like you would in plain Java land.
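To see the "run the body whenever you want" behaviour in isolation, here is a small sketch. It assumes Scala 2, where the compiler performs this rewrite (DelayedInit is deprecated in recent versions, so expect a warning). The names DelayedInitSketch, Deferred, Job and runBodies are made up for this illustration.

```scala
import scala.collection.mutable.ListBuffer

object DelayedInitSketch {
  // Shared log so the order of events can be observed.
  val log = ListBuffer.empty[String]

  // Traits are not rewritten, so this is an ordinary method implementation.
  trait Deferred extends DelayedInit {
    private val bodies = ListBuffer.empty[() => Unit]

    // Store the class body instead of running it.
    override def delayedInit(body: => Unit): Unit = { bodies += (() => body) }

    // Run the stored bodies on demand.
    def runBodies(): Unit = bodies.foreach(body => body())
  }

  // The statement in this class body is handed to delayedInit
  // instead of running inside the constructor.
  class Job extends Deferred {
    log += "job body ran"
  }
}
```

Creating a `new DelayedInitSketch.Job` leaves the log empty. The entry only appears after calling `runBodies()` on the instance, which is the same trick SimpleApp plays in its main method.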

28. Dynamic Type

I’ve had a hard time trying to decide if I should put this type into this vademecum of types or not. In the end I decided to add it, since it makes this collection of Type descriptions complete. So the question is, why did I hesitate so much?

Scala allows us to have Dynamic Types, right inside of a Statically/Strictly Typed language! Which is why I was considering skipping it, and leaving a separate place for its description - as it’s basically "hacking around" all the descriptions you’ve seen above ;-) Let’s see it in action though, and how it fits into the Scala Type-ecosystem.

Imagine a class JsonObject which contains arbitrary JSON data. Let’s have methods, matching the keys of this JSON object, which would return an Option[JValue], where a JValue can be another JObject, JArray or JString / JNumber. The usage would look like the example below.

But before that, remember to enable this language feature in the given file (or REPL) by importing it.

There are a few features (like the experimental macros for example) that need to be explicitly imported in a file to be enabled. If you want to know more about these features, take a look at the [scala.language](http://www.scala-lang.org/api/current/index.html#scala.language$) object or read the Scala Improvement Process 18 document ([SIP-18](https://docs.google.com/document/d/1nlkvpoIRkx7at1qJEZafJwthZ3GeIklTFhqmXMvTX9Q/edit)).

// remember, that we have to enable this language feature by importing it!

import scala.language.dynamics

// TODO: Has missing implementation

class Json(s: String) extends Dynamic {

???

}

val jsonString = """

{

"name": "Konrad",

"favLangs": ["Scala", "Go", "SML"]

}

"""

val json = new Json(jsonString)

val name: Option[String] = json.name

// will compile (once we implement)!

So… how do we fit this into an otherwise Statically Typed language? The answer is simple - compiler rewrites and a special marker trait: scala.Dynamic.

Ok, end of rant and back to the basics. So… How do we use Dynamic? In fact, it’s used by implementing a few "magic" methods:

-

applyDynamic

-

applyDynamicNamed

-

selectDynamic

-

updateDynamic

Let’s take a look, with examples, at each of them. We’ll start with the most "typical" one, and move on to those which allow the construct shown above (which didn’t compile back then) to work this time ;-)

28.1. applyDynamic

Ok, our first magic method looks like this:

// applyDynamic example

object OhMy extends Dynamic {

def applyDynamic(methodName: String)(args: Any*): Unit = {

println(s"""|methodName: $methodName,

|args: ${args.mkString(",")}""".stripMargin)

}

}

OhMy.dynamicMethod("with", "some", 1337)

So the signature of applyDynamic takes the method name and its arguments. The arguments arrive as a plain list, so we have to access them by their order. Very nice for building up some strings etc. Our implementation only prints what we want to know about the method being called. Did it really get the values/method name we would expect? The output would be:

methodName: dynamicMethod,

args: with,some,1337
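As a variation, applyDynamic can return a value instead of printing it, which makes the rewrite easier to check. This is a minimal sketch, and the object name Echo is made up for this illustration:

```scala
import scala.language.dynamics

// Hypothetical object: every method call on it is routed to applyDynamic.
object Echo extends Dynamic {
  // Receives the method name and the positional arguments, in call order.
  def applyDynamic(methodName: String)(args: Any*): String =
    s"$methodName(${args.mkString(", ")})"
}
```

Here the compiler turns `Echo.greet("hello", 42)` into `Echo.applyDynamic("greet")("hello", 42)`, so the call evaluates to the string `greet(hello, 42)`.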

28.2. applyDynamicNamed

Ok, that was easy. But it didn’t give us too much control over the names of the parameters.

Wouldn’t it be nice if we could just write JSON.node(nickname = "ktoso")? Well… turns out we can!

// applyDynamicNamed example

object JSON extends Dynamic {

def applyDynamicNamed(name: String)(args: (String, Any)*): Unit = {

println(s"""Creating a $name, for:\n "${args.head._1}": "${args.head._2}" """)

}

}

JSON.node(nickname = "ktoso")

So this time instead of just a list of values, we also get their names. Thanks to this the response for this example will be:

Creating a node, for:

"nickname": "ktoso"
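The example above only looks at the first argument. A sketch that uses all named arguments, and returns the result instead of printing it, might look like this (the object name JsonBuilder is made up for this illustration):

```scala
import scala.language.dynamics

// Hypothetical builder: named arguments arrive as (name, value) pairs.
object JsonBuilder extends Dynamic {
  def applyDynamicNamed(name: String)(args: (String, Any)*): String = {
    // Render each pair as "key": "value", keeping the call-site order.
    val fields = args.map { case (key, value) => "\"" + key + "\": \"" + value + "\"" }
    name + " { " + fields.mkString(", ") + " }"
  }
}
```

With this in place, `JsonBuilder.node(nickname = "ktoso", lang = "Scala")` evaluates to `node { "nickname": "ktoso", "lang": "Scala" }`.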

I can easily imagine some pretty slick DSLs being built around this!

28.3. selectDynamic

Now it’s time for the more "unusual" methods. The apply methods were pretty easy to understand: it’s just a method with some arbitrary name. But hey, isn’t almost everything in Scala a method - so couldn’t we have a method on an object that would act as a field? Yeah, so let’s give it a try! We’ll use the example with applyDynamic here, and try to act like it has a method without ():

OhMy.name // compilation error

Hey! Why didn’t this work with applyDynamic? Yeah, you figured it out already I guess. Such methods (without ()) are treated specially, as they would usually represent fields for example. applyDynamic won’t trigger on such calls.

Let’s look at our first selectDynamic call:

class Json(s: String) extends Dynamic {

def selectDynamic(name: String): Option[String] =

parse(s).get(name) // parse is a helper that is not shown here; it is assumed to return a Map-like view of the JSON

}

And this time when we execute HasStuff.bananas we’ll get "I have bananas!" as expected. Notice that here we return a value instead of printing it. It’s because it "acts as a field" this time around. But we could also return things (of arbitrary types) from any other method described here (applyDynamic could return the string instead of printing it).
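The HasStuff object mentioned above is not defined in this section. A minimal sketch that is consistent with the described output, and is an assumption on my part rather than the original definition, could be:

```scala
import scala.language.dynamics

// Hypothetical definition, reconstructed from the output described above.
object HasStuff extends Dynamic {
  // Called for parameterless selections such as HasStuff.bananas.
  def selectDynamic(name: String): String = s"I have $name!"
}
```

The compiler rewrites `HasStuff.bananas` into `HasStuff.selectDynamic("bananas")`, so any "field" name works here.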

28.4. updateDynamic

What’s left, you ask? Ask yourself the following question then: "Since I can act like a Dynamic object has some value in some field… What else should I be able to do with it?" My answer to that would be: "set it"! That’s what updateDynamic is used for. There is one special rule about updateDynamic though - it’s only valid if you also took care of selectDynamic - that’s why in the first example the code generated errors about both select and update. For example, if we implemented only updateDynamic, we would get an error that selectDynamic was not implemented, and it wouldn’t compile anyway. It makes sense in terms of plain semantics if you think about it.

When we’re done with this example, we can actually make the (wrong) code from the first code snippet work. The snippet below is an implementation of what was shown in the first snippet on that other website, and this time it’ll actually work ;-)

import scala.collection.mutable

object MagicBox extends Dynamic {

private val box = mutable.Map[String, Any]()

def updateDynamic(name: String)(value: Any): Unit = { box(name) = value }

def selectDynamic(name: String) = box(name)

}

Using this Dynamic "MagicBox" we can store items at arbitrary "fields" (well, they do seem like fields, even though they are not ;-)). An example run might look like:

scala> MagicBox.banana = "banana"

MagicBox.banana: Any = banana

scala> MagicBox.banana

res7: Any = banana

scala> MagicBox.unknown

java.util.NoSuchElementException: key not found: unknown
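If the exception for unknown "fields" is not what you want, selectDynamic can return an Option instead. This is a sketch of that variation, with SafeBox being a made-up name for this illustration:

```scala
import scala.collection.mutable
import scala.language.dynamics

// Hypothetical variant of MagicBox that returns an Option instead of throwing.
class SafeBox extends Dynamic {
  private val box = mutable.Map.empty[String, Any]

  // Called for assignments such as box.banana = "banana".
  def updateDynamic(name: String)(value: Any): Unit = { box(name) = value }

  // Called for reads such as box.banana; None when nothing was stored.
  def selectDynamic(name: String): Option[Any] = box.get(name)
}
```

After `val b = new SafeBox` and `b.banana = "banana"`, reading `b.banana` gives `Some("banana")`, while `b.unknown` gives `None`.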

By the way… are you curious how Dynamic (source code) is implemented? The fun part here is that the trait Dynamic does absolutely nothing by itself - it’s "empty", just a marker interface. Obviously all the heavy lifting (call-site rewriting) is done by the compiler here.

29. Bibliography and Kudos

29.1. Reference and further reading

Obviously this vademecum required quite a bit of research and double-checking, so here are all the links I’ve found helpful (and you might too).

-

[scala-spec] The Scala Language Specification

-

[enumeration-docs] http://www.scala-lang.org/api/current/index.html#scala.Enumeration

-

[enum-sip] https://docs.google.com/document/d/1mIKml4sJzezL_-iDJMmcAKmavHb9axjYJos_7UMlWJ8/edit

-

[twitter-scala-school] Twitter’s Scala School: http://twitter.github.io/scala_school explains quite a bit of Scala concepts, which helped me a lot in nicely explaining Variance (nicest explanation I’ve ever found).

-

[universal-types] Very old, but still valid explanation of Universal Types: http://www.scala-lang.org/old/node/128

-

[maciver-existentials] Existential Types by D.R. MacIver: http://www.drmaciver.com/2008/03/existential-types-in-scala/

-

[scala-doc] Scala Doc: http://www.scala-lang.org/api/current/index.html

-

[dragos-specialized] Great presentation about introducing @specialized to Scala 2.8, by Dragos: Scala Days 2010 - Specialization

-

[wiki-diamond] Wikipedia on the Diamond Problem

-

[generics-specialization] Martin Odersky's and Iulian Dragos's whitepaper about specialization Compiling generics through user-directed type specialization

-

[dangers-of-subtype-polymorphism] The dangers of correlating subtype polymorphism with generic polymorphism blog post

-

[safar-linearization] Safari Books online about Type Linearization: http://blog.safaribooksonline.com/2013/05/30/traits-how-scala-tames-multiple-inheritance/

-

[phantom-types-haskell] James Iry and his blog post about Phantom Types in Haskell and Scala

-

[typesafe-builder] One of the earliest blog posts in the Scala community (I think) about Phantom Types by Rafael Ferreira Type Safe Builder Pattern in Scala

-